Why 73% of Agentic AI Projects Fail (4 Proven Fixes)

Picture this: You've just green-lit an agentic AI project that promises to revolutionize your customer service. Six months later, your AI agents are booking customers for non-existent appointments, escalating simple requests to C-suite executives, and have somehow convinced three people they need to buy unicorns. Sound familiar?

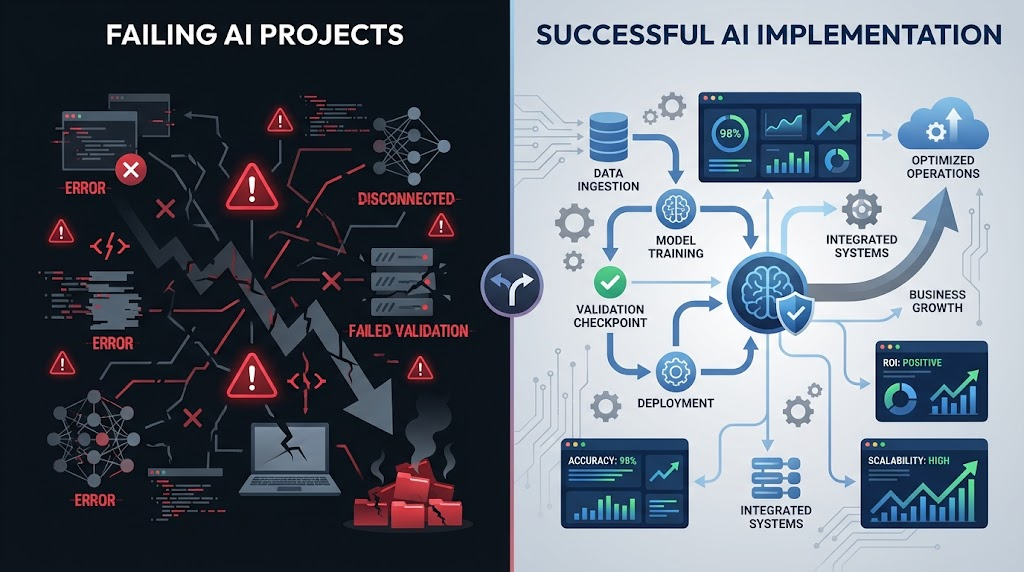

Here's the uncomfortable truth—73% of agentic AI projects crash and burn before reaching their intended goals. But here's the empowering part: the failures follow predictable patterns, which means the fixes are surprisingly systematic.

So what's really killing these autonomous AI rollouts? And more importantly, how can you join the 27% who actually nail it?

The Hidden Reality Behind AI Project Failures

Before we dive into solutions, let's get honest about what's really happening out there. Agentic AI failures aren't just minor hiccups—they're expensive, reputation-damaging disasters that can set organizations back years.

The data tells a stark story:

- 73% of agentic AI initiatives fail to meet their core objectives

- Average cost overrun: 180% of original budget

- Timeline delays: 8-14 months beyond planned launch

- User adoption rates below 30% in failed projects

But here's what most post-mortems miss: these aren't technology problems. They're systems problems masquerading as tech issues.

The 4 Critical Failure Patterns (And Why They Keep Happening)

1. The "Magic Wand" Mentality

Teams approach agentic AI implementation like it's a plug-and-play solution. They expect AI agents to intuitively understand complex business contexts, handle edge cases gracefully, and somehow read between the lines of poorly defined processes.

Reality check: AI agents are incredibly powerful, but they're not mind readers. They need crystal-clear boundaries, well-defined decision trees, and explicit fallback protocols.

2. Skipping the "Boring" Foundation Work

Everyone wants to jump straight to the exciting stuff—training models, designing conversational flows, testing edge cases. But successful autonomous AI systems are built on unsexy foundations:

- Data governance frameworks

- Process documentation

- Integration architecture planning

- Change management protocols

Skip this groundwork, and your AI agents become expensive digital wildcards.

3. The "Set It and Forget It" Trap

Here's a question that reveals everything: How often should you review your AI agent's performance?

If you answered "quarterly" or "when problems arise," you're already in the failure zone. Successful agentic AI projects require continuous monitoring, regular fine-tuning, and proactive optimization cycles.

4. Misaligned Success Metrics

Most teams measure the wrong things. They track technical metrics (uptime, response speed) while ignoring business impact (customer satisfaction, process efficiency, actual ROI).

Without proper measurement frameworks, you can't distinguish between AI that's working and AI that's merely functioning.

The 4 Fixes That Actually Work

Now for the good stuff. These aren't theoretical solutions—they're battle-tested approaches that consistently transform failing projects into success stories.

Fix #1: Implement the "Constraint-First" Design Method

Start every agentic AI project by defining what your AI agents cannot do, not what they can do. This counterintuitive approach creates safer, more reliable systems.

Practical implementation:

- Map your "no-go" zones (sensitive data, high-stakes decisions, regulatory boundaries)

- Create explicit escalation triggers

- Design fallback protocols for every edge case

- Test constraint violations before testing capabilities

This method prevents the "runaway AI" scenarios that tank projects and builds stakeholder confidence from day one.
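
To make the idea concrete, here is a minimal sketch of a constraint check that runs before an agent is allowed to act. Everything in it is a hypothetical illustration: the action names, the `ProposedAction` type, and the 500.0 limit are assumptions, not part of any particular framework. The point is the ordering: no-go zones first, then a fallback for anything unrecognized, then escalation triggers, and only then "allow."

```python
from dataclasses import dataclass

# Hypothetical no-go zones and allowlist, chosen only for illustration.
FORBIDDEN_ACTIONS = {"access_payment_data", "change_contract_terms"}
ALLOWED_ACTIONS = {"book_appointment", "issue_credit", "answer_faq"}
ESCALATION_AMOUNT_LIMIT = 500.0  # assumed limit above which a human must approve


@dataclass(frozen=True)
class ProposedAction:
    name: str
    amount: float = 0.0


def check_constraints(action: ProposedAction) -> str:
    """Return 'block', 'escalate', or 'allow' for a proposed agent action."""
    # No-go zones are checked first, before any capability logic runs.
    if action.name in FORBIDDEN_ACTIONS:
        return "block"
    # Fallback protocol: anything the agent was never cleared to do goes to a human.
    if action.name not in ALLOWED_ACTIONS:
        return "escalate"
    # Explicit escalation trigger: high-value actions need human approval.
    if action.amount > ESCALATION_AMOUNT_LIMIT:
        return "escalate"
    return "allow"


# Constraint violations are tested before any capability is tested.
assert check_constraints(ProposedAction("access_payment_data")) == "block"
assert check_constraints(ProposedAction("sell_unicorn")) == "escalate"
assert check_constraints(ProposedAction("issue_credit", 900.0)) == "escalate"
assert check_constraints(ProposedAction("book_appointment")) == "allow"
```

Because unknown actions default to escalation rather than approval, adding a new capability means deliberately adding it to the allowlist, which keeps the "cannot do" list as the starting point.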

Fix #2: Deploy the "3-Layer Validation Framework"

Successful autonomous AI implementations never rely on single points of validation. Instead, they use layered verification:

- Layer 1: Real-time confidence scoring for every AI decision

- Layer 2: Pattern-based anomaly detection

- Layer 3: Human oversight triggers based on risk thresholds

This framework catches errors before they become expensive mistakes and provides clear audit trails for compliance requirements.
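
As a rough sketch of how the three layers could fit together, the function below runs one decision through all of them and returns the reasons it was flagged, which doubles as a simple audit record. The thresholds, the z-score anomaly check, and the `validate_decision` name are assumptions made for illustration; a real system would pick its own detection method and tune the limits against real data.

```python
from statistics import mean, pstdev

# Assumed thresholds; real values should come from your own risk analysis.
CONFIDENCE_FLOOR = 0.80   # Layer 1: minimum acceptable confidence
ANOMALY_Z_LIMIT = 3.0     # Layer 2: maximum z-score against recent values
RISK_REVIEW_LIMIT = 0.70  # Layer 3: risk score that triggers human review


def validate_decision(confidence, value, recent_values, risk_score):
    """Run one decision through three layers; return (approved, reasons)."""
    reasons = []

    # Layer 1: confidence scoring for the individual decision.
    if confidence < CONFIDENCE_FLOOR:
        reasons.append("low_confidence")

    # Layer 2: simple pattern check, comparing the value with recent history.
    if len(recent_values) >= 2:
        spread = pstdev(recent_values)
        if spread > 0 and abs(value - mean(recent_values)) / spread > ANOMALY_Z_LIMIT:
            reasons.append("anomalous_value")

    # Layer 3: human oversight trigger based on a risk threshold.
    if risk_score >= RISK_REVIEW_LIMIT:
        reasons.append("human_review_required")

    # The decision is approved only if no layer raised a flag.
    return (not reasons, reasons)


recent_refunds = [100, 102, 98, 101, 99]
print(validate_decision(0.95, 100, recent_refunds, 0.1))
print(validate_decision(0.95, 500, recent_refunds, 0.1))
```

Every layer runs even when an earlier one has already flagged the decision, so the returned list shows all the reasons at once instead of only the first.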

Fix #3: Establish "AI Performance Sprints"

Forget quarterly reviews. High-performing agentic AI systems use weekly 2-hour "performance sprints" to:

- Review AI decision patterns from the previous week

- Identify optimization opportunities

- Update training data with new edge cases

- Adjust parameters based on real-world performance

This creates a continuous improvement cycle that keeps your AI agents sharp and aligned with evolving business needs.
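
A sprint goes faster when the week's decisions are already summarized. The sketch below assumes a hypothetical log format, a list of records with `intent` and `outcome` fields where the outcome is `"resolved"`, `"escalated"`, or `"failed"`, and boils it down to the handful of numbers a two-hour review can act on.

```python
from collections import Counter


def summarize_week(decisions):
    """Summarize one week of agent decisions (hypothetical log schema)."""
    total = len(decisions)
    outcomes = Counter(d["outcome"] for d in decisions)
    # Intents that failed most often are candidates for new training examples.
    failures_by_intent = Counter(
        d["intent"] for d in decisions if d["outcome"] == "failed"
    )
    return {
        "total": total,
        "failure_rate": outcomes["failed"] / total if total else 0.0,
        "escalation_rate": outcomes["escalated"] / total if total else 0.0,
        "top_failing_intents": failures_by_intent.most_common(3),
    }


week_log = [
    {"intent": "book_appointment", "outcome": "resolved"},
    {"intent": "reschedule", "outcome": "failed"},
    {"intent": "billing_question", "outcome": "escalated"},
    {"intent": "book_appointment", "outcome": "resolved"},
]
print(summarize_week(week_log))
```

The `top_failing_intents` list is the natural input for the "update training data with new edge cases" step, while the two rates give a week-over-week trend to watch.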

Fix #4: Use "Business Impact Dashboards" Instead of Technical Metrics

Create dashboards that answer the questions that actually matter:

- How much time is the AI saving our team each week?

- What's the customer satisfaction delta since AI deployment?

- How many escalations are we preventing?

- What's our actual ROI trajectory?

Technical metrics still matter, but business impact metrics determine success or failure.
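
The arithmetic behind such a dashboard can be very simple. The sketch below is one possible way to compute the numbers, with assumed inputs (`hourly_cost`, `weekly_ai_cost`, and CSAT scores on whatever scale you already use) and a basic ROI definition of net benefit divided by cost. Your finance team may define ROI differently, so treat the formula as a placeholder.

```python
def business_impact(hours_saved_per_week, hourly_cost, weekly_ai_cost,
                    csat_before, csat_after,
                    escalations_before, escalations_after):
    """Compute a few business-impact figures from assumed weekly inputs."""
    if weekly_ai_cost <= 0:
        raise ValueError("weekly_ai_cost must be positive to compute ROI")
    # Value of the time the team gets back each week.
    weekly_benefit = hours_saved_per_week * hourly_cost
    return {
        "weekly_benefit": weekly_benefit,
        # Simple ROI: net benefit relative to what the AI costs to run.
        "roi": (weekly_benefit - weekly_ai_cost) / weekly_ai_cost,
        # Change in customer satisfaction since deployment.
        "csat_delta": csat_after - csat_before,
        # Escalations avoided compared with the pre-deployment baseline.
        "escalations_prevented": escalations_before - escalations_after,
    }


print(business_impact(
    hours_saved_per_week=40, hourly_cost=50, weekly_ai_cost=1000,
    csat_before=4.0, csat_after=4.5,
    escalations_before=120, escalations_after=90,
))
```

All of these figures depend on a baseline captured before deployment, which is why the pre-launch measurements matter as much as the dashboard itself.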

The Implementation Roadmap

Want to put this into action? Here's your step-by-step playbook:

Weeks 1-2: Foundation Audit

- Document current processes that AI will touch

- Identify integration points and potential failure modes

- Establish baseline metrics for comparison

Weeks 3-4: Constraint Design

- Define AI agent boundaries and escalation triggers

- Create fallback protocols

- Design your 3-layer validation framework

Weeks 5-8: Controlled Deployment

- Start with low-risk scenarios

- Implement performance sprint cycles

- Build your business impact dashboards

Week 9+: Scale and Optimize

- Gradually expand AI agent responsibilities

- Refine validation thresholds based on real data

- Share learnings across similar projects

Your Next Step

The difference between the 73% who fail and the 27% who succeed isn't luck, bigger budgets, or better technology. It's systems thinking applied to agentic AI implementation.

Every failed AI project teaches us something valuable: artificial intelligence amplifies whatever systems you already have. Good systems become great with AI. Broken systems become expensive disasters.

The question isn't whether your next agentic AI project will face challenges—it will. The question is whether you'll have the frameworks in place to turn those challenges into competitive advantages.

Ready to build AI systems that actually work? Start with Fix #1 today. Define your constraints first, capabilities second. Your future self (and your budget) will thank you.